At the Large Hadron Collider, some serious science goes down. So serious, in fact, that the facility plans to ratchet up its data collection to the point where it’s creating a staggering 400PB of data every year.

So far, in its entire lifespan, the LHC has generated an archive of 100PB, and that total is currently growing at 27PB per year. But by 2023, Cern’s infrastructure manager Tim Bell expects the facility to churn out 400PB a year, or 400,000,000GB. An insane amount of data.
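For a sense of scale, the figures above can be checked with a few lines of Python. This is only a back-of-the-envelope sketch: it assumes decimal (SI) units, where 1PB is 1,000,000GB, and the variable names are illustrative rather than anything Cern uses.

```python
# Assumption: decimal (SI) units, so 1 PB = 10**6 GB.
GB_PER_PB = 1_000_000


def pb_to_gb(petabytes):
    """Convert petabytes to gigabytes using decimal units."""
    return petabytes * GB_PER_PB


# Figures quoted in the article.
archive_pb = 100          # total archive generated so far
current_rate_pb = 27      # current growth per year
projected_rate_pb = 400   # expected output per year by 2023

projected_gb = pb_to_gb(projected_rate_pb)
growth_factor = projected_rate_pb / current_rate_pb
archives_per_year = projected_rate_pb / archive_pb

print(f"{projected_gb:,} GB per year")
print(f"{growth_factor:.1f}x the current annual rate")
print(f"{archives_per_year:.0f}x the existing archive, every year")
```

On those numbers, the projected rate is roughly 15 times today's, and each year would produce four times as much data as the LHC has archived in its whole lifespan so far.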

The European scientists are squaring up to the problem by using OpenStack, shifting their data storage to servers in Budapest rather than upgrading Cern’s existing data centre. “There are now four OpenStack clouds at Cern, the largest comprising 7,000 cores on approximately 3,000 servers, but this is expected to pass 150,000 cores in total by the first quarter of 2015,” explained Bell. That figure will still have to grow rapidly if the infrastructure is to cope come 2023. [V3]

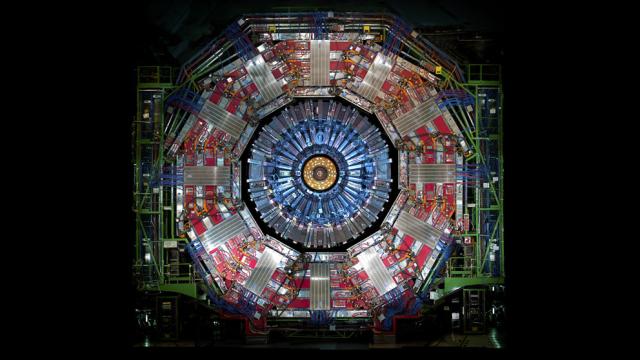

Image by Phil Plait under Creative Commons licence