On March 29, 2023, more than 500 top technologists and business leaders signed onto an eye-catching open letter begging artificial intelligence labs to immediately pause all training on any AI systems more powerful than OpenAI’s GPT-4 for at least six months. Ploughing ahead without taking a breather, they warned, would pose “profound risks to society and humanity.” Luckily, the world listened and the word “AI” has vanished into our collective memory holes.

Just kidding. Advances in AI most certainly have not stopped, paused, hiccuped, or done anything other than abruptly accelerate in the six months since. As of October 2023, just about every startup or business even remotely connected to technology has tried to figure out ways to add ChatGPT-style chatbots or AI image generators into its pitch to consumers. AI companies like OpenAI have ploughed ahead with newer models and greater capabilities while others, like Meta and Amazon, have shifted their priorities to pour resources into the brewing AI tech race. The so-called pause was more like a starting gun.

Below are some of the biggest advancements in AI that have occurred in the six months since the Future of Life Institute published its letter. Many of these touchstones are technical in nature, but others involve the way civil society, content creators, and lawmakers have responded to this quickly evolving world.

Amazon Invested $US4 Billion in Anthropic

Amazon, one of the fiercest titans in the last tech race, was surprisingly quiet during the first months of the generative AI explosion. That all changed in September when Amazon loudly announced it would pour up to $US4 billion, yes billion, into the AI startup Anthropic.

The hefty investment news came just one week after the company revealed its plans to integrate LLMs into its future Alexa home assistants. The e-commerce giant announced its new smart home speaker would come equipped with an LLM “custom-built and specifically optimized for voice interactions, and the things we know our customers love—getting real-time information, efficient smart home control, and maximising their home entertainment.” It will also reportedly use audio collected from the device to train future AI models.

Elon Launched His Own AI Company Months After Calling For Pause

Billionaire Elon Musk isn’t exactly known for his consistency, but this contradiction might take the cake. Just four months after adding his name to the list of industry heavyweights calling for an AI pause to save humanity, Musk decided to, yes, you guessed it, launch an AI company of his own. The new venture, called xAI, seeks to create a chatbot to compete with OpenAI’s ChatGPT and Google’s Bard.

Sam Altman Began His D.C. Charm Offensive

The rapid ascent of LLMs into mainstream consciousness has rightfully prompted a fair degree of anxiety and calls for regulation. OpenAI CEO Sam Altman, arguably the biggest name in the current AI race, tried to get out ahead of those concerns in a slick, widely reported Congressional hearing in May. Altman walked away having managed to simultaneously scare the crap out of lawmakers and convince them that he was open to meaningful regulation.

During the hearing, Altman compared the new era of generative AI to Photoshop “but on steroids.” The founder went on to advocate for a licensing regime that generative AI creators would need to go through before being able to release certain products to the public. Altman won the favor of several lawmakers including Louisiana Sen. John Kennedy, who asked Altman if he would like to take on the role of the nation’s top AI regulator.

“I love my current job,” Altman replied.

WGA Writers Stood Up to AI-Generated Content

The sudden explosion of mainstream interest in ChatGPT-style chatbots and other generative AI tools has led creative types from numerous fields to fear the possibility their job might soon be outsourced to an untrusted bot. Those concerns were among the factors fueling a five-month strike by Hollywood writers in the Writers Guild of America (WGA).

The WGA ultimately won out on AI and worked with studios to include this language in a summary of their new bargaining agreement.

“AI can’t write or rewrite literary material, and AI-generated material will not be considered source material,” the agreement read.

Meta Is Playing Chatbot Catchup With OpenAI

Meta, the company responsible for Facebook and Instagram, clearly had no interest in abiding by the six-month AI pause. Instead, it’s used that time to roll out a number of AI models and features and has plans to litter its social media sites with AI chatbot personas in the near future. Not to be outdone, sources speaking with The Wall Street Journal said Meta was preparing to release a powerful AI chatbot with capabilities similar to those of OpenAI’s most advanced GPT models. Meta’s chatbot, according to the report, would be focused on assisting businesses with services including producing sophisticated text and analyses.

OpenAI’s GPT Gains the Ability to Hear and See

Not only did OpenAI not heed the letter’s plea to pause development, it actually moved forward to equip its once text-based GPT model with the ability to hear and see. In September, the company announced its model would soon have speech-to-text and text-to-speech capabilities and could recognize an image presented to it. The company released a video showing image recognition in action, in which a user submitted a photo of a bicycle and asked the AI to help them lower the seat.

Adobe Launched Its Firefly AI Illustrator

The big-name tech players weren’t the only ones ramping up their generative AI capabilities over the past six months. Adobe, which reshaped image editing in 1990 with the release of Photoshop, announced that it will make its own AI image generators available to subscribers. In addition to the generator, Adobe is also releasing an AI tool that can expand the borders of an image by creating out-of-frame content and another that uses AI for colour correction. All of those are included under Adobe’s “Firefly” tools.

Content Farms Are Already Using AI Chatbots to Plagiarize News

Society hasn’t completely crumbled to the ground yet as some AI doomers may have predicted, but other less dramatic harms predicted by some of the AI letter signatories have materialised. In particular, generative AI chatbots have already been used to churn out scores of low-quality news posts, some containing potentially harmful misinformation.

Researchers at NewsGuard earlier this year identified 37 websites that appeared to use AI to “scramble and rewrite” stories from mainstream publications and republish them for ad revenue. The content farms publishing the stories took genuine news articles written by publications like The New York Times and CNN and scrambled them to appear as if they were unique. Some of the websites appeared to be automated, essentially operating with no human involvement.

AI-Powered Robotaxis Had a Disastrous Month in San Francisco

This summer marked a pivotal inflection point for driverless cars. San Francisco, the beating heart of much of the industry, voted to allow Waymo and Cruise to operate their autonomous taxis anywhere in the city, all day. It was an immediate disaster.

Within a week of the new designation, one Cruise car lodged itself in a pool of cement. Others suffered WiFi failures that brought a city street to a standstill. More promiscuous riders, meanwhile, turned the late-night autonomous vehicles into a personal sex palace.

OpenAI Admits AI Text Detectors Don’t Really Work

Scholars and lawmakers alike have cited the urgent need for detection systems to identify AI-generated text. The only problem is those defences aren’t really effective even when deployed. OpenAI, the firm responsible for ChatGPT, admitted as much in a recent blog post.

“Do AI detectors work?” the company rhetorically asked. “In short, no, not in our experience. Our research into detectors didn’t show them to be reliable enough given that educators could be making judgments about students with potentially lasting consequences.”

Still, that hasn’t stopped other ambitious startups from claiming they can do just that.

IRS Says It Will Use AI to Go After Tax Dodgers

Tech companies weren’t the only ones racing ahead with AI applications. In early September, the Internal Revenue Service announced it would use AI to identify and root out wealthy tax filers who use “sophisticated schemes to avoid taxes.” The IRS says the new AI-aided system will prioritise issuing audits for higher-income taxpayers who bring in more than $US1 million in earnings every year. AI will also be used to initiate investigations into 75 of the largest US partnerships.

Authors Sue AI Companies for Training Models on Pirated Books

The copious troves of mostly pirated written material used to train the current generation of large language models were always destined for legal challenges. Maybe the most interesting of those so far came by way of comedian Sarah Silverman and authors Christopher Golden and Richard Kadrey, who joined together in a lawsuit slamming OpenAI’s ChatGPT and Meta’s LLaMA for being trained on copyrighted materials.

The authors claim the tech companies used text scraped from Library Genesis, Z-Library, Sci-Hub, and other online repositories that host content in violation of copyright rules. The Atlantic recently detailed more than 190,000 books included in the Books3 dataset which was reportedly used to train Meta’s LLaMA model.

Political Candidates Are Attacking Each Other With Silly Deepfakes

Political deepfakes, long feared but never quite realised, could play a major, potentially disruptive role in the upcoming 2024 presidential election. Both former president Donald Trump and Florida governor Ron DeSantis have already used AI-generated audio and images to trash each other during the Republican primary.

In the Trump example, he used deepfaked audio mimicking George Soros, Adolf Hitler, and the Devil himself to mock DeSantis’ shaky campaign announcement on Twitter Spaces. DeSantis fired back with deepfake images purporting to show Trump embracing former National Institute of Allergy and Infectious Diseases Director Anthony Fauci.

Zoom Jumped on the AI Bandwagon Despite User Backlash

After a brief consumer backlash over the confusing ways the company said it would use consumer data to train AI models, voice chat giant Zoom doubled down on its commitment to the tech in September.

The company announced it intended to move forward with its “AI Companion” which users can interact with to solve a variety of tasks like getting prepped for an upcoming meeting or writing up summaries of a past video session. Like a human assistant, the companion will also reportedly file support tickets and respond to questions about a video chat raised in real time.

Top Tech Leaders Head to Washington D.C.

Tech-interested lawmakers in Washington D.C. desperately want to avoid repeating, in the new age of AI, the mistakes they made during the social media era. In the past, politicians appeared both uninformed and uninterested in technology and let big-grinning, fast-rolling tech executives bulldoze over any accountability.

A cynic could say the same forces have been at work in the six months since the pause letter, but the optics are clearly different. New York Sen. and Senate Majority Leader Chuck Schumer has already convened around half a dozen AI-specific hearings on Capitol Hill, all with the goal of developing smart, impactful AI regulation in a short period of time. Many of the tech industry’s most notable names, including Elon Musk and Mark Zuckerberg, even joined forces to contribute to a recent closed-door sit-down.

Even Coca-Cola Dove Into AI With New, Futuristic Soda

AI advancements certainly haven’t faltered in the past six months. In fact, interest in the space has grown so large it’s become a meme space for mega brands. Coca-Cola, the world’s most important soft drink provider, jumped in on the action in September by releasing an “AI-powered” soda, which the company says was co-created with a digital assistant to evoke a flavor associated with the year 3000.

Gizmodo got its hands on the flashy new soda and, to put it bluntly, the fizzy marketing stunt fell flat. The future, as it turns out, tastes bland.

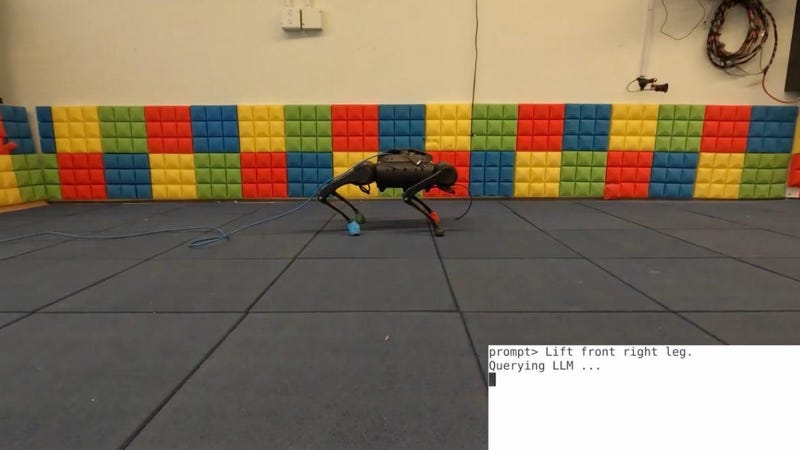

Google Engineers Taught a Robot Dog to Catch a Squirrel Using Generative AI

Paranoid observers of evolving AI tech may take some solace in the fact that nearly all of the recent mainstream attention has focused on LLMs limited to lifeless, harmless strings of code. Well, that’s not quite the case.

Researchers at Google DeepMind recently created a new AI model that can take complex human language commands and translate them into a form a robot dog could understand. Videos of the results show the researchers typing in commands like, “hop over here,” or “don’t grab that squirrel.” The language model interprets strings of words and converts them into the proper string of code that the machine dog can understand. Within moments, the robo-dog actually responds to the inputs in ways even a well-trained physical dog might not.