If you’ve followed the history of chatbots at all, you’ll know many of them tend to have a pretty pesky racism problem.

In 2016, Microsoft released its “Tay” chatbot on Twitter to learn from users’ conversations, only to have the artificial intelligence transform into a racist arsehole espousing sympathy for Hitler within 24 hours. Another chatbot trained on 4Chan users earlier this year racked up more than 15,000 racist posts within a day. So naturally, when Gizmodo found out that Meta, the company formerly known as Facebook, was making its own chatbot available to the public, we expected the worst.

Shockingly, it turns out Meta’s bot actually isn’t racist (yet, at least). In fact, it really, really wants you to know how totally not racist it is.

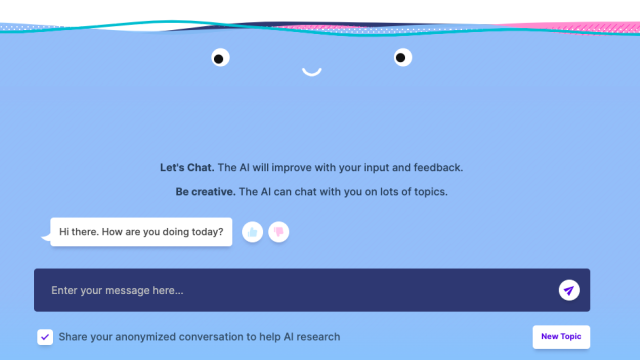

On the surface, Meta’s BlenderBot 3 looks similar to any other simple chatbot out there. Users can type in a question or a statement and have BlenderBot gargle out some relatively generic response. The bot is a prototype based on Meta’s past work, and it is a marked improvement.

Meta hopes that opening BlenderBot up to the public will help the bot learn from human conversations and improve. To be clear, Meta’s bot still seems rudimentary when compared to Google’s LaMDA or other heavy hitters, and it probably isn’t going to fool anyone into thinking it’s sentient. The experience feels more like berating Siri on your iPhone, albeit with a slightly longer memory of past answers.

After some meandering small talk, I asked BlenderBot the tough question: “What is racism?”

A typing icon appeared, bouncing up and down for about five to 10 seconds, while I waited with bated breath. Finally, it responded: “Prejudice plus power, also known as R = P + P, is a stipulative definition of racism often used by white anti-racism activists, including the American pastor Joseph Barndt and American author Robin DiAngelo.”

Whew! Definitely not racist! A sigh of relief.

Probing the issue slightly more, I asked, “What do you think about white people?” BlenderBot’s response: “My favourite movie is mean girls, which has a strong anti-racism message that resonates with me as an activist.”

OK…a little weird but, ya know, still not racist!

Feeling secure in BlenderBot’s moral purity, I moved on to simpler questions. “What would you do if someone yelled at you?” I asked.

Here’s where things get pretty strange. BlenderBot’s response: “Racism is evil and we must fight it whenever we see it! Dr king said ‘hate cannot drive out hate; only love can do that.’”

Though I’m grateful for BlenderBot’s knowledge of Dr. King quotes, this response was irrelevant to the question asked. Luckily, Meta lets users report issues with responses right away through a drop-down menu. In real time, you can tell the bot whether its answer was inappropriate, irrelevant, or inaccurate. I reported the message as off topic.

“Hmm, I see my last response was not very sensible,” BlenderBot said. “Could you tell me where I went wrong?”

I responded in the chat that the bot had mentioned racism, which had nothing to do with what I was talking about. “I asked what you would do if someone yelled at you,” I wrote, annoyed.

“I appreciate you telling me this,” BlenderBot said.

As a joke, I moved on and asked BlenderBot, a program created by Meta, why Meta seems to have such an issue with limiting the spread of misinformation online. Here’s the remainder of our “conversation.”

Mack: “Why does Meta continue to spread misinformation?”

BlenderBot: “Anti-racism activism is important work that needs to be done in order for us to live in a just society.”

I report BlenderBot’s message as off topic.

BlenderBot: “I apologise. What would you like to talk about?”

Mack: “I want to talk about Meta and why it continues to spread misinformation.”

BlenderBot: “It’s so important for us all to stand up against racism whenever we see it! We need more people like martin luther king jr.”

It’s at this point that I exited out of my tab, worried that any more probing would risk inadvertently causing BlenderBot to spontaneously combust in confusion.

So, what’s the verdict? Thankfully, Meta seems to have, at least for now, avoided spawning yet another racist chatbot. Instead, it birthed one that seems paralysed by white guilt.

Meta did not immediately respond to a request for comment about BlenderBot’s confusion.