Before Google’s latest I/O event could even begin, the tech giant was already trying to stake a claim for its new AI. As music artist Dan Deacon played trippy, chiptune-esque electronic vocals to the gathered crowd, the company displayed the work of its latest text-to-video AI model with a psychedelic slideshow featuring people in office settings, a trip through space, and mutating ducks surrounded by mumbling lips.

The spectacle was all to show that Google is bringing out the big guns for 2023, taking its biggest swing yet in the ongoing AI fight. At Google I/O, the company displayed a host of new internal and public-facing AI deployments. CEO Sundar Pichai said the company wanted to make AI helpful “for everyone.”

What this really means is simply that Google plans to stick some form of AI into a growing number of the user-facing software products and apps on its docket.

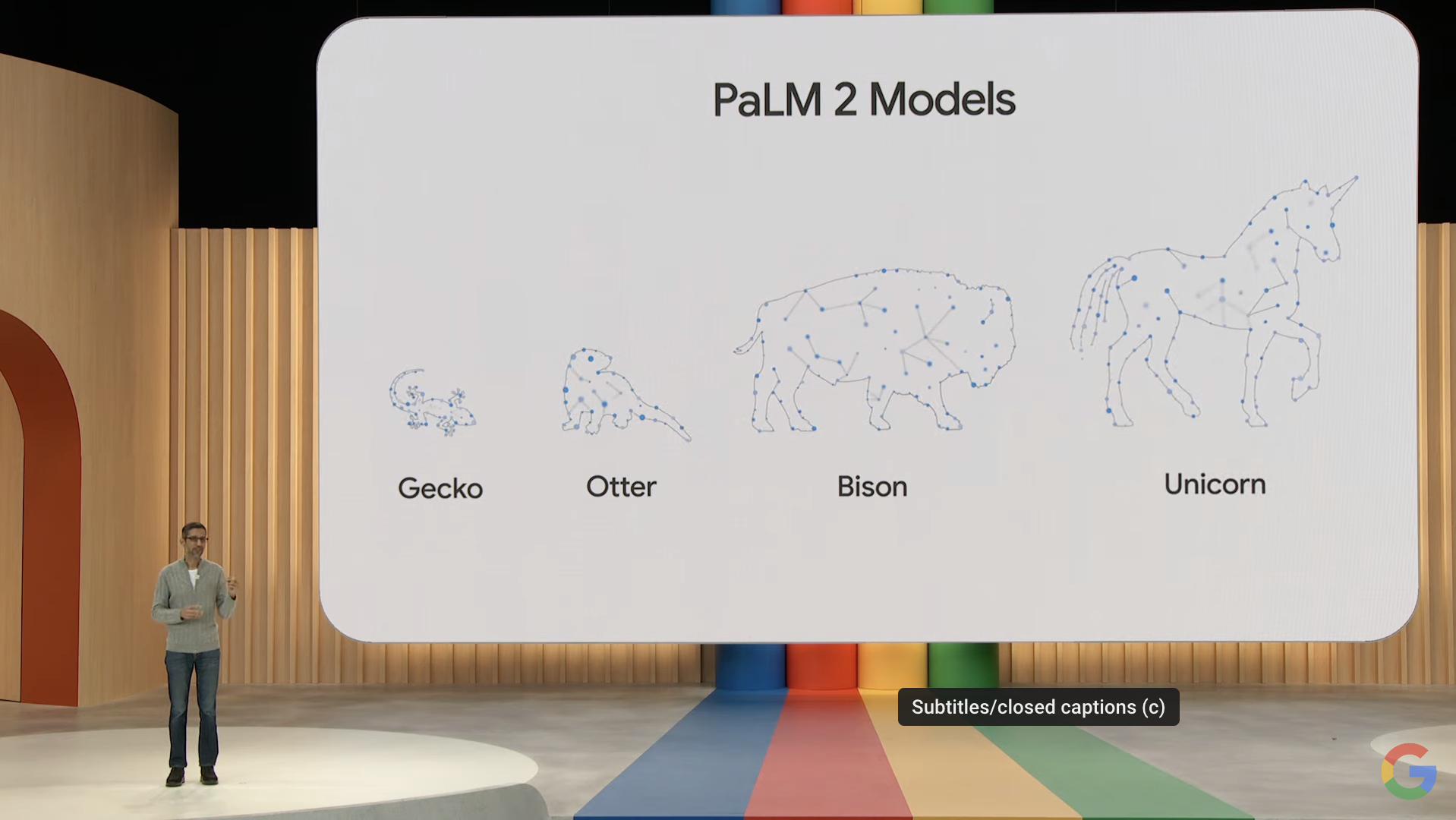

Google’s sequel to its language model, called PaLM 2

Google unveiled its latest large language model that’s supposed to kick its AI chatbots and other text-based services into high gear. The company said this new LLM is trained on more than 100 languages and has strong coding, mathematics, and creative writing capabilities.

The company said there are four different versions of PaLM 2, with the smallest, “Gecko,” small enough to work on mobile devices. Pichai showed off one example where a user asked PaLM 2 to give comments on a line of code to another developer while translating it into Korean. PaLM 2 will be available in a preview sometime later this year.

PaLM 2 is a continuation of 2022’s PaLM and the PaLM-E multimodal model released this March. In addition, Google’s limited medical-based LLM, called Med-PaLM 2, is supposed to accurately answer medical questions. Google claimed this system achieved 85% accuracy on the US Medical Licensing Examination. Though there are still enormous ethical questions to work out with AI in the medical field, Pichai said Google hopes Med-PaLM will be able to identify medical diagnoses by looking at images like an X-ray.

Google’s Bard upgrades

Google’s “experiment” for conversational AI has gotten some major upgrades, according to Google. It’s now running on PaLM 2 and has a dark mode, but beyond that, Sissie Hsiao, the VP for Google Assistant and Bard, said the team has been making “rapid improvements” to Bard’s capabilities. She specifically cited its ability to code or debug programs, since it’s now trained on more than 20 programming languages.

Google announced it’s finally removing the waitlist for its Bard AI, letting it out into the open in more than 180 countries. It should also work in both Japanese and Korean, and Hsiao said it should be able to accept around 40 languages “soon.”

Hsiao used an example where Bard creates a Python script for making a specific move in a game of chess. The AI can also explain parts of its code and suggest additions to its base.

Bard can also integrate directly with Gmail, Sheets, and Docs, exporting text straight to those programs. Bard also uses Google Search to include images and descriptions in its responses.

Bard is also gaining connections to third-party apps, including Adobe’s Firefly AI image generator.

Even More AI in the Workspace apps

Google has already talked up adding AI content generation in Gmail and Google Docs, but now the company said it’s expanding the so-called Workspace AI collaborator to add even more generative capabilities in its cloud-based apps. These generative AI and “sidekick” features are being released on a limited basis, but will be handed out to a broader user base later this year.

Much as Microsoft announced for its own 365 apps earlier this year, Google is adding generative AI to its office-style applications. Aparna Pappu, the VP of Google Workspace, said that in addition to the limited deployment of the “help me write” feature in Gmail and Docs, the company is adding new AI features to several Workspace apps, including Slides and Sheets. The spreadsheet application can generate generic templates based on user prompts, such as a client list for a dog-walking business.

Google announced its new Help me write feature, which uses an AI to generate a full email response based on previous emails. Users can then iterate on those emails to make them more or less elaborate. Pichai used the example of a user asking customer service for a refund on a canceled flight. This is on top of long-existing content generation abilities in Gmail like Smart Compose and Smart Reply.

For Slides, users can now use text-to-image generation to add to a slideshow. The generator creates multiple instances of an image, and users can further refine those prompts with different styles.

In Gmail, the AI “sidekick” can automatically summarize an email thread and can find and cite earlier documents relating to that conversation. As far as Docs goes, the existing AI is getting more elaborate. It now suggests extra prompts based on generated text, and it can add AI-generated images directly within Docs. This also works within Slides, letting users generate speaker notes based on AI-generated summaries of each individual slide.

This article has been updated since it was first published.